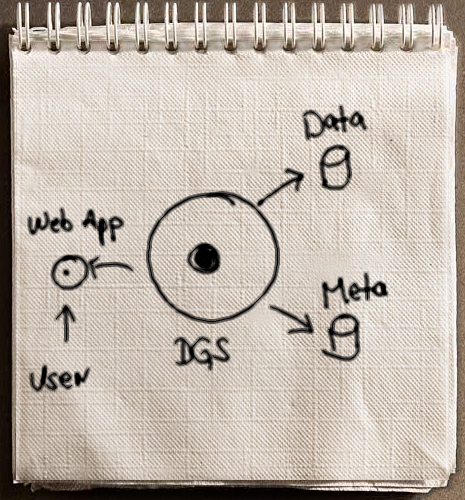

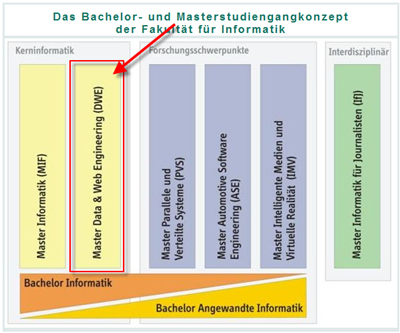

I spent the last few evenings writing an extension concept for the WebComposition/DGS approach. Initially, I was looking for a semantic description of the RESTful interface of the DGS. There are plenty of approaches, and a lot of research is going on into extending WSDL interfaces with semantics; OWL-S and WSMO are probably the most important examples in this field. For REST-driven approaches, however, it turns out to be a bit more tricky. For the DGS, I decided to try the SA-REST approach of the Kno.e.sis Services Science Lab at Wright State University, Ohio.

```xml
<rdf:Description rdf:about="http://localhost/datagridservice/DataGridService"
                 xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
                 xmlns:SAREST="http://lsdis.cs.uga.edu/SAREST#">
  <SAREST:input rdf:resource="http://lsdis.cs.uga.edu/ont.owl#Location_Query" />
  <SAREST:output rdf:resource="http://lsdis.cs.uga.edu/ont.owl#Location" />
  <SAREST:action>HTTP GET</SAREST:action>
  <SAREST:lifting rdf:resource="http://www.restful.ws/lifting.xsl" />
  <SAREST:lowering rdf:resource="http://www.restful.ws/lowering.xsl" />
  <SAREST:operation rdf:resource="http://lsdis.cs.uga.edu/ont.owl#Location_Search" />
</rdf:Description>
```

However, I did not want to make this an internal component of the DGS, as SA-REST is still a research project and no recommendation or standard exists yet. So I came up with an extension concept for the DGS that allows additional extensions to be loaded dynamically alongside the core components of the DGS.

The IExtension interface provides hotspots where custom code can be executed right before and right after each of the CRUD (Create, Read, Update, Delete) events.

```csharp
namespace WebComposition.Dgs.Extension
{
    public interface IExtension
    {
        bool CreateStartUp(ref ExecutionContext context);

        bool CreateTearDown(ref ExecutionContext context);

        bool ReadStartUp(ref ExecutionContext context);

        //...
    }
}
```

The ExecutionContext contains the request URI, the corresponding data adapter and (if already available) the current content to be returned with the response.

An extension can modify or create the content to be returned. In this case, the hook must return false. This return value acts as a dirty flag: it tells the DGS that the extension has provided new content. Any further internal processing by the DGS is then skipped, and the content provided by the extension is returned.
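To make the dirty-flag contract concrete, here is a minimal C# sketch of how a host could run the read hooks. It is not the DGS implementation: `ExecutionContext` is reduced to the request URI and the content (the data adapter is omitted), the interface is cut down to a single hook in the hypothetical `IReadExtension`, and `ReadPipeline` and `HelloExtension` are illustrative names.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, simplified stand-in for the DGS ExecutionContext.
// The real type also carries the data adapter, omitted here.
public class ExecutionContext
{
    public Uri RequestUri { get; set; }
    public string Content { get; set; }   // null until content is available
}

// Hypothetical interface reduced to one hook; the real IExtension has more.
public interface IReadExtension
{
    bool ReadStartUp(ref ExecutionContext context);
}

// Hypothetical host loop illustrating the dirty-flag contract.
public static class ReadPipeline
{
    public static string Execute(ExecutionContext context,
                                 IEnumerable<IReadExtension> extensions,
                                 Func<ExecutionContext, string> internalRead)
    {
        foreach (var extension in extensions)
        {
            // false == dirty: the extension supplied the response content,
            // so internal processing is skipped and that content is returned.
            if (!extension.ReadStartUp(ref context))
                return context.Content;
        }
        // No extension claimed the request: use the built-in read logic.
        return internalRead(context);
    }
}

// Illustrative extension that answers one URI and ignores all others.
public class HelloExtension : IReadExtension
{
    public bool ReadStartUp(ref ExecutionContext context)
    {
        if (context.RequestUri.AbsolutePath.EndsWith("/hello"))
        {
            context.Content = "<hello/>";
            return false;   // dirty flag: new content provided
        }
        return true;        // untouched: continue normal processing
    }
}
```

Because the first extension that returns false short-circuits the loop, the order in which extensions are registered matters in this sketch.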

To keep the WebComposition/DGS approach as flexible as possible, extensions can be deployed at runtime. The DGS is told which extensions to use by listing them in the web.config file.

```xml
<webComposition>
  <dataGridService>
    <extensions>
      <extension type="WebComposition.Dgs.Extensions.SaRest.SaRestHandler, WebComposition.Dgs.Extensions.SaRest, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
    </extensions>
    ...
  </dataGridService>
</webComposition>
```
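The actual DGS loader is not shown in this post. The following C# sketch only illustrates the general .NET mechanism for turning such `type` attributes into instances: `Type.GetType` resolves the assembly-qualified name and `Activator.CreateInstance` calls the parameterless constructor. To stay self-contained it parses the XML with LINQ to XML rather than the ASP.NET configuration API, and `IExtension`, `ExtensionLoader` and `NoOpExtension` are hypothetical stand-ins.

```csharp
using System;
using System.Collections.Generic;
using System.Xml.Linq;

// Hypothetical stand-in for the DGS IExtension interface (hooks omitted).
public interface IExtension { }

// Illustrative extension used to demonstrate loading.
public class NoOpExtension : IExtension { }

public static class ExtensionLoader
{
    // Instantiates every <extension type="..."/> found in the configuration XML.
    public static List<IExtension> Load(string configXml)
    {
        var result = new List<IExtension>();
        foreach (var element in XDocument.Parse(configXml).Descendants("extension"))
        {
            string typeName = (string)element.Attribute("type");
            // throwOnError: true surfaces typos in the config instead of skipping them.
            Type type = Type.GetType(typeName, throwOnError: true);
            if (!typeof(IExtension).IsAssignableFrom(type))
                throw new InvalidOperationException(typeName + " does not implement IExtension");
            result.Add((IExtension)Activator.CreateInstance(type));
        }
        return result;
    }
}
```

Since only the type name lives in the configuration, a new extension assembly can be dropped into the application and registered without recompiling the DGS core.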

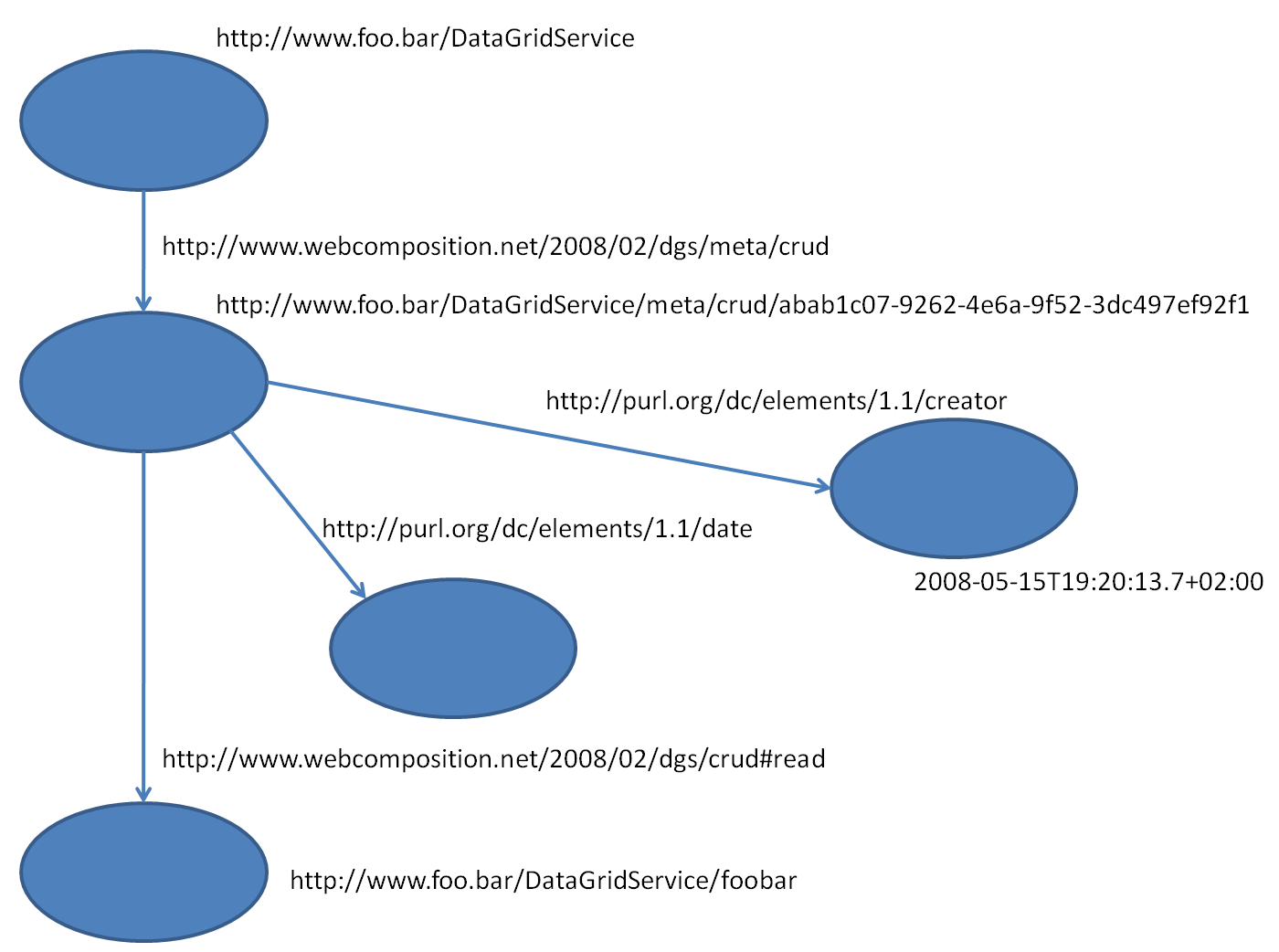

Based on this extension mechanism, I created my very first extension to provide SA-REST support. If you take a closer look at SA-REST, you might notice a drawback of the approach (one that is directly related to the REST principles): when using RDFa or a microformat in HTML/XHTML, the description of the service is rather static. The DGS, on the other hand, provides a highly flexible solution, and this is where its full power comes into play. Analyzing the SA-REST annotation, you will notice that it consists of RDF triples, i.e. metadata, and this metadata can of course be stored within the DGS itself.
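To illustrate that an SA-REST annotation is just a set of triples, the following C# sketch flattens an `rdf:Description` like the one at the top of this post into (subject, predicate, object) tuples. It is a hypothetical helper, not part of the DGS, and it handles only this flat shape (no nested descriptions, blank nodes, or `rdf:RDF` features beyond that).

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

public static class SaRestTriples
{
    private static readonly XNamespace Rdf = "http://www.w3.org/1999/02/22-rdf-syntax-ns#";

    // Hypothetical helper: every child of the first rdf:Description becomes one
    // triple; the object is rdf:resource if present, else the literal text.
    public static List<(string Subject, string Predicate, string Object)> Extract(string rdfXml)
    {
        var description = XDocument.Parse(rdfXml).Descendants(Rdf + "Description").First();
        string subject = (string)description.Attribute(Rdf + "about");
        return description.Elements()
            .Select(p => (subject,
                          p.Name.NamespaceName + p.Name.LocalName,   // full predicate URI
                          (string)p.Attribute(Rdf + "resource") ?? p.Value))
            .ToList();
    }
}
```

Applied to the description above, the `SAREST:action` element, for example, yields the triple (DataGridService URI, `http://lsdis.cs.uga.edu/SAREST#action`, `HTTP GET`).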

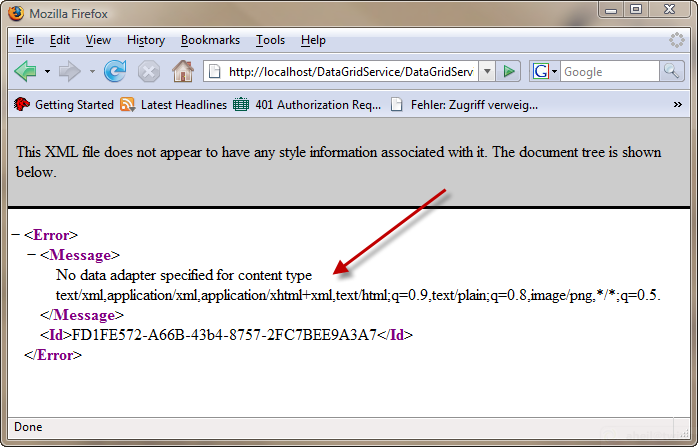

To add this metadata, we perform an update on the service's meta URI; in my case, this is http://localhost/datagridservice/DataGridService/meta. The RDF used here is based on the original SA-REST example.
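A minimal C# sketch of such an update using `HttpClient`. The post does not say which HTTP method or media type the DGS expects for an update; this sketch assumes `PUT` with `application/rdf+xml`, and `MetaUpdater` is a hypothetical helper name. The request is built separately so it can be inspected without a running DGS.

```csharp
using System;
using System.Net.Http;
using System.Text;
using System.Threading.Tasks;

public static class MetaUpdater
{
    // Assumption: the DGS maps "update" to HTTP PUT and accepts RDF/XML.
    public static HttpRequestMessage BuildUpdate(Uri serviceUri, string rdfXml)
    {
        var metaUri = new Uri(serviceUri.AbsoluteUri.TrimEnd('/') + "/meta");
        return new HttpRequestMessage(HttpMethod.Put, metaUri)
        {
            Content = new StringContent(rdfXml, Encoding.UTF8, "application/rdf+xml")
        };
    }

    // Sends the update and reports whether the service accepted it.
    public static async Task<bool> UpdateAsync(HttpClient client, Uri serviceUri, string rdfXml)
    {
        using var request = BuildUpdate(serviceUri, rdfXml);
        using var response = await client.SendAsync(request);
        return response.IsSuccessStatusCode;
    }
}
```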

The SA-REST extension also extends the URI scope of the DGS, which is actually a very cool feature: once the extension is deployed, the /meta scope is extended with /meta/sarest. If you now perform a GET request on http://localhost/datagridservice/DataGridService/meta/sarest, the extension returns the corresponding SA-REST metadata stored above.
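One way such a scope extension could work with the `ReadStartUp` hook is sketched below in C#. This is not the actual `SaRestHandler`: `ExecutionContext` is a reduced stand-in, and the hypothetical `IMetaStore`/`InMemoryMetaStore` replace the DGS data adapter as the place where the metadata lives.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, reduced stand-in for the DGS ExecutionContext.
public class ExecutionContext
{
    public Uri RequestUri { get; set; }
    public string Content { get; set; }
}

// Hypothetical metadata store standing in for the DGS data adapter.
public interface IMetaStore
{
    string GetMeta(string resourcePath);   // RDF stored via .../meta, or null
}

public class SaRestScopeExtension
{
    private const string Suffix = "/meta/sarest";
    private readonly IMetaStore store;

    public SaRestScopeExtension(IMetaStore store) => this.store = store;

    public bool ReadStartUp(ref ExecutionContext context)
    {
        string path = context.RequestUri.AbsolutePath;
        if (!path.EndsWith(Suffix, StringComparison.OrdinalIgnoreCase))
            return true;   // not our scope: the DGS handles the request as usual

        // .../DataGridService/meta/sarest -> metadata of .../DataGridService
        string resource = path.Substring(0, path.Length - Suffix.Length);
        string rdf = store.GetMeta(resource);
        if (rdf == null)
            return true;   // nothing stored: fall through to the DGS

        context.Content = rdf;
        return false;      // dirty flag: the extension supplied the response
    }
}

// Illustrative in-memory store for trying the sketch out.
public class InMemoryMetaStore : IMetaStore
{
    private readonly Dictionary<string, string> data = new Dictionary<string, string>();
    public void Put(string resourcePath, string rdf) => data[resourcePath] = rdf;
    public string GetMeta(string resourcePath) =>
        data.TryGetValue(resourcePath, out var rdf) ? rdf : null;
}
```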

To round off this exercise, I've also created a set of XSL transformations that create XHTML to be used within any Web page. For example, the SA-REST annotation mixed with the content, again based on the original SA-REST example, looks like the snippet below. Keep in mind that this XHTML snippet was created dynamically by the DGS itself using a transformation.

```html
<p about="http://localhost/datagridservice/DataGridService" xmlns="http://www.w3.org/1999/xhtml" xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#" xmlns:sarest="http://lsdis.cs.uga.edu/SAREST#">
  The logical input of this service is an
  <span property="sarest:input">http://lsdis.cs.uga.edu/ont.owl#Location_Query</span>
  object. The logical output of this service is a list of
  <span property="sarest:output">http://lsdis.cs.uga.edu/ont.owl#Location</span>
  objects. This service should be invoked using an
  <span property="sarest:action">HTTP GET</span>
  request.
  <meta property="sarest:lifting" content="http://www.restful.ws/lifting.xsl" />
  <meta property="sarest:lowering" content="http://www.restful.ws/lowering.xsl" />
  <meta property="sarest:operation" content="http://lsdis.cs.uga.edu/ont.owl#Location_Search" />
</p>
```
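The transformations themselves are not part of this post. As a rough idea of what such a step can look like, here is a C# sketch using .NET's `XslCompiledTransform` with an illustrative XSLT 1.0 stylesheet that maps the SA-REST `rdf:Description` onto the XHTML/RDFa markup shown above. The stylesheet and the `SaRestXhtml` class are hypothetical, not the author's originals.

```csharp
using System.IO;
using System.Xml;
using System.Xml.Xsl;

public static class SaRestXhtml
{
    // Illustrative stylesheet: SA-REST RDF/XML -> XHTML paragraph with RDFa.
    private const string Xslt = @"<xsl:stylesheet version='1.0'
    xmlns:xsl='http://www.w3.org/1999/XSL/Transform'
    xmlns:rdf='http://www.w3.org/1999/02/22-rdf-syntax-ns#'
    xmlns:sarest='http://lsdis.cs.uga.edu/SAREST#'
    xmlns='http://www.w3.org/1999/xhtml'>
  <xsl:output method='xml' omit-xml-declaration='yes'/>
  <xsl:template match='/'>
    <xsl:apply-templates select='//rdf:Description'/>
  </xsl:template>
  <xsl:template match='rdf:Description'>
    <p about='{@rdf:about}'>
      The logical input of this service is an
      <span property='sarest:input'><xsl:value-of select='sarest:input/@rdf:resource'/></span>
      object. The logical output of this service is a list of
      <span property='sarest:output'><xsl:value-of select='sarest:output/@rdf:resource'/></span>
      objects. This service should be invoked using an
      <span property='sarest:action'><xsl:value-of select='sarest:action'/></span>
      request.
      <meta property='sarest:lifting' content='{sarest:lifting/@rdf:resource}'/>
      <meta property='sarest:lowering' content='{sarest:lowering/@rdf:resource}'/>
      <meta property='sarest:operation' content='{sarest:operation/@rdf:resource}'/>
    </p>
  </xsl:template>
</xsl:stylesheet>";

    // Applies the stylesheet to an SA-REST RDF/XML document and returns XHTML.
    public static string Transform(string rdfXml)
    {
        var xslt = new XslCompiledTransform();
        using (var xslReader = XmlReader.Create(new StringReader(Xslt)))
            xslt.Load(xslReader);

        var output = new StringWriter();
        using (var input = XmlReader.Create(new StringReader(rdfXml)))
        using (var writer = XmlWriter.Create(output, xslt.OutputSettings))
            xslt.Transform(input, writer);
        return output.ToString();
    }
}
```

Since the `rdf` and `sarest` namespaces are in scope on the literal `p` element, XSLT copies their declarations to the output, just as in the snippet above.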

The extension concept allows new custom components to be added and the DGS to be customized even further, while the SA-REST extension provides a first way to describe the RESTful interface of the DGS semantically.

Soon I am going to build a second proof of concept, using another approach to describing RESTful services, to demonstrate the flexibility of the extension concept.